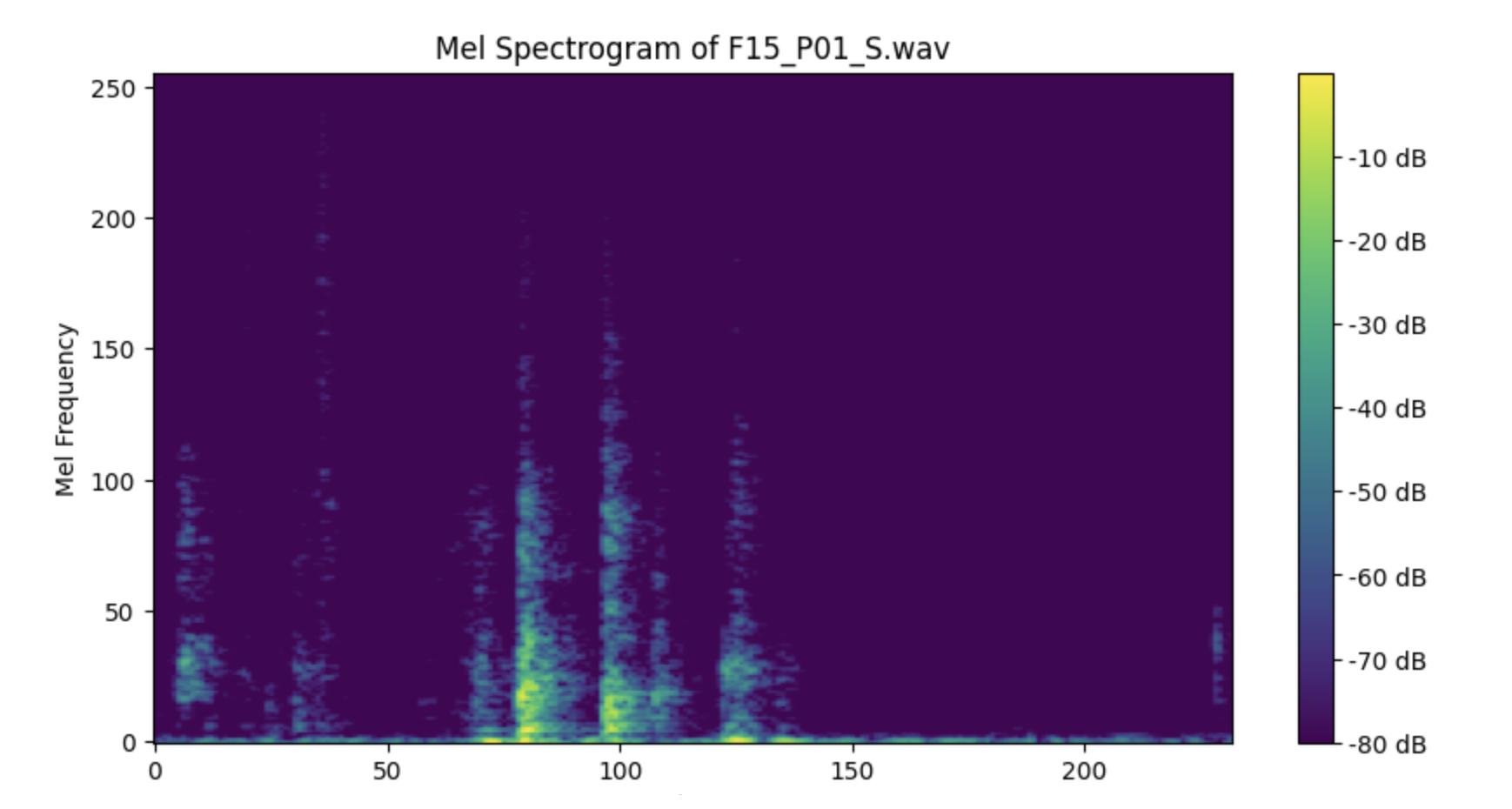

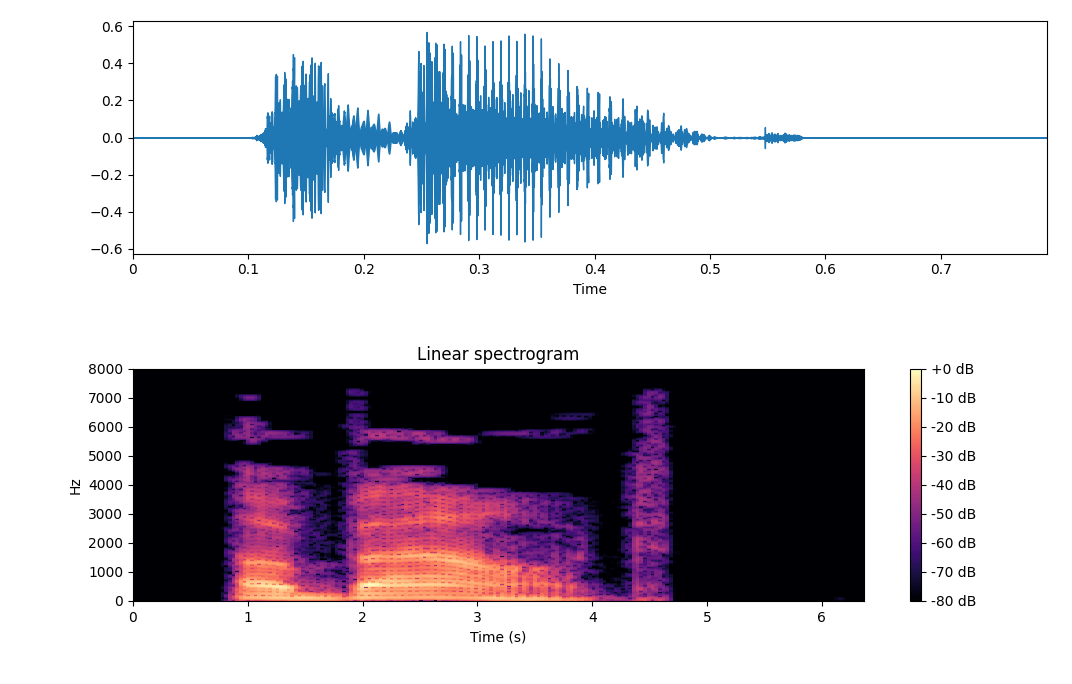

With no publicly available fall audio datasets, we built our own from scratch, recording a 150 lb test dummy alongside 7 real human volunteers (ages 20–57) across 3 different microphones (22 kHz–192 kHz sample rates).

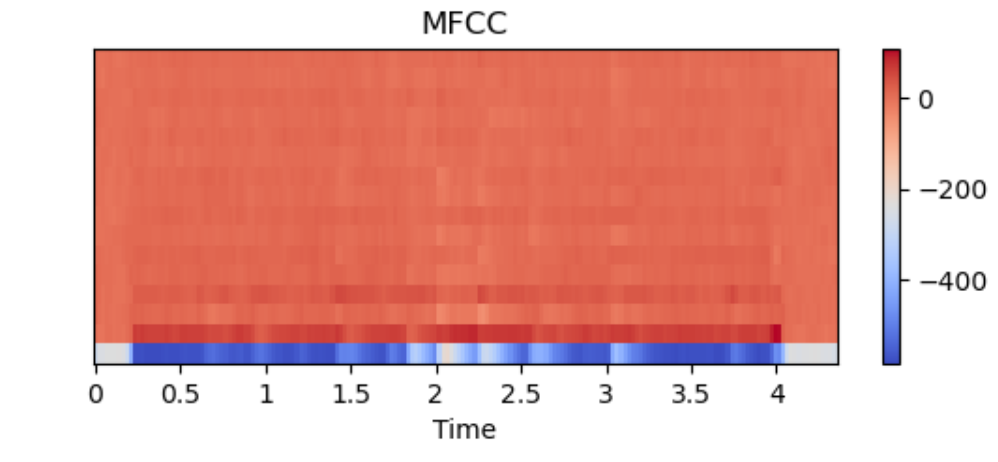

Augmentation techniques: frequency masking, time masking, and time stretching, applied to increase dataset diversity and model robustness.
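To make the three augmentations concrete, here is a minimal pure-Python sketch. It is an illustration under stated assumptions, not the project's actual pipeline: the function names, the list-of-rows spectrogram layout (rows are frequency bins, columns are time frames), and the mask widths are all hypothetical. The time stretch shown is a naive linear-interpolation resample, which also shifts pitch; a pitch-preserving stretch would typically use a phase vocoder from an audio library instead.

```python
import random


def frequency_mask(spec, max_width, rng):
    """Zero out a random band of consecutive frequency bins (rows)."""
    n_freq = len(spec)
    width = rng.randint(0, min(max_width, n_freq))
    start = rng.randint(0, n_freq - width)
    return [
        [0.0] * len(row) if start <= i < start + width else list(row)
        for i, row in enumerate(spec)
    ]


def time_mask(spec, max_width, rng):
    """Zero out a random span of consecutive time frames (columns)."""
    n_time = len(spec[0])
    width = rng.randint(0, min(max_width, n_time))
    start = rng.randint(0, n_time - width)
    return [
        [0.0 if start <= t < start + width else v for t, v in enumerate(row)]
        for row in spec
    ]


def time_stretch_naive(samples, rate):
    """Resample a non-empty waveform by linear interpolation.

    rate > 1 shortens the clip, rate < 1 lengthens it.
    Assumption: this naive version changes pitch along with duration.
    """
    n_out = max(1, int(round(len(samples) / rate)))
    last = len(samples) - 1
    out = []
    for i in range(n_out):
        pos = min(i * rate, last)   # fractional read position in the input
        lo = int(pos)
        hi = min(lo + 1, last)
        frac = pos - lo
        out.append(samples[lo] * (1 - frac) + samples[hi] * frac)
    return out


if __name__ == "__main__":
    rng = random.Random(0)                      # seeded for repeatability
    spec = [[1.0] * 10 for _ in range(8)]       # toy 8-bin x 10-frame spectrogram
    augmented = time_mask(frequency_mask(spec, 3, rng), 4, rng)
    stretched = time_stretch_naive([0.0, 1.0, 2.0, 3.0], 0.5)
    print(len(augmented), len(augmented[0]), len(stretched))
```

Each function returns a new object and leaves its input untouched, so one recording can be augmented several times with different random draws.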